Tutorial: Universal approximation theorem#

Tutorial to the class Neural Networks.

Objective#

The objective of this tutorial is to design a simple network in order to understand the arhitecture of a neural network. Then we will focus on the hyperparameters of the network. Last we will work with the neural network implementation in scikit learn.

import numpy as np

import matplotlib.pyplot as plt

In the first part of this tutorial, we want to build a simple network with 1 input, 1 hidden layer with 3 neurons and 1 output. We want a sigmoid activation function for the hidden layer and a no activation function for the output neuron.

Question

Draw this network on paper. Think about all the arrays you are going to need

For now, let suppose we have only one observation x and one corresponding output y = sin(x)

x = 0.5

y = np.sin(2*x)

We write N=3 the number of hidden neurons. Declare the arrays w1, b1, w2 and b2 for the weights and biases. Initialize these arrays with random values.

# your code here

N = 3

Write two functions:

one for the sigmoid

and one for the derivative of the sigmoid

# your code here

Plot the sigmoid and its derivative it in the range [-10,10]

# your code here

Gradient of the cost function#

We want to optimize our network and to do so we need to change the weights and biases. As we saw in class, we need to compute the gradient of the cost function with respect to the weights and biases in order to march down the gradient of the cost function. For this simple network you may want to re-derive the expression of the gradient based on the forwarward propagation function.

On a piece of paper, derive

you will see that as you move backward in the network, you can reuse the derivative of the layer above. Do you see why it is called the backpropagation algorithm?

Implement it in python.

# your code here

Choose a learning rate eta and increment your weights and biases by \(-\eta \nabla C\)

# your code here

Compute the new cost function. It should have gone down… There are many possibilities if that is not the case: first decrease the learning rate, then check all your functions.

# your code here

Add a loop to repeat the previous operation until you reach convergence. You will have to first define convergence.

# your code here

Congratulations!!

You have built your first neural network.

Study the properties of convergence: how many epoch do you need to reach convergence? When you change the learning rate, how does it affect the convergence?

# your code here

More samples#

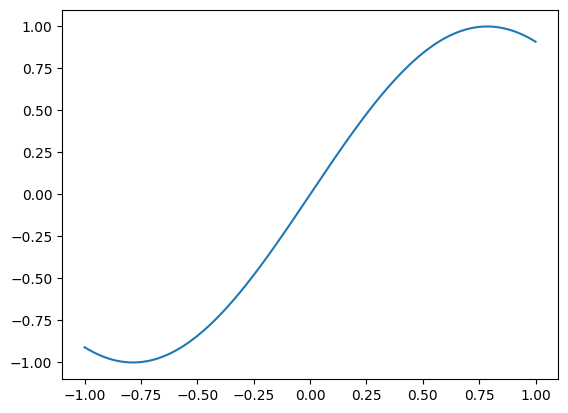

So far, we have only worked with one sample. It is actually a good news that we were able to fit a neural network on it. As you may have noticed in the begining of this tutorial, we had \(y = \sin(2*x)\). So we are trying to guess this \(\sin\) function. Below is the actual data

N_sample = 200

x = np.linspace(-1, 1, N_sample)[:, None]

y = np.sin(2*x)

plt.plot(x,y)

[<matplotlib.lines.Line2D at 0x7f57c0d12d00>]

Below is an extension of the code you have just writen in order to compute the cost function and the gradient over all sample. The code is writen on purpose in a very compact form.

Look at the code above and identify all the steps that we have described so far.

Add comments in the code to describe what the code is doing

Change the code to define 3 hyper parameters: number of neurons in the hidden layer, learning rate and the number of epochs.

def tanh(x):

return np.tanh(x)

def derivative_tanh(x):

return 1 - tanh(x)**2

w1 = np.random.uniform(0, 1, (1, 10))

w2 = np.random.uniform(0, 1, (10, 1))

b1 = np.full((1, 10), 0.1)

b2 = np.full((1, 1), 0.1)

for i in range(5000):

a1 = x

z2 = a1.dot(w1) + b1

a2 = tanh(z2)

z3 = a2.dot(w2) + b2

cost = np.sum((z3 - y)**2)/2

z3_delta = z3 - y

dw2 = a2.T.dot(z3_delta)

db2 = np.sum(z3_delta, axis=0, keepdims=True)

z2_delta = z3_delta.dot(w2.T) * derivative_tanh(z2)

dw1 = x.T.dot(z2_delta)

db1 = np.sum(z2_delta, axis=0, keepdims=True)

for param, gradient in zip([w1, w2, b1, b2], [dw1, dw2, db1, db2]):

param -= 0.0001 * gradient

In the same figure, plot the output as a function of input parameters

for the observations

for the output of the network

# your code here

Modify the code above to get the cost function at each epoch and plot it

# your code here

What hyper parameter has the most impact to get an accurate reconstruction.

# your code here

Neural network with scikit-learn#

Scikit-learn has a built-in Neural network function

from sklearn.neural_network import MLPRegressor

Look at the documentation of the MLPRegressor. This class of model is extremely similar to what we have been working on so far. Identify the parameters that we have already explored.

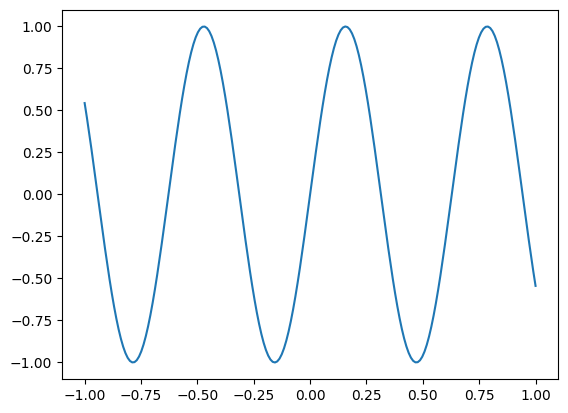

We are now trying to predict a more complicated function:

x = np.linspace(-1, 1, 200)[:, None]

y = np.sin(10*x).squeeze()

plt.plot(x,y)

[<matplotlib.lines.Line2D at 0x7f57a7b31c40>]

Try to fit the MLP with all default parameters. Are you satisfied with the result?

# your code here

There are several things we can do to get a better fit. Let’s go through the options one by one. Let’s focus first on the hyper-parameters we know.

What is the default activation. Do you see an improvement if you revert it to the smooth functions we have see so far?

# your code here

Try to increase/decrease the number of hidden layers

# your code here

As you can see in the documentation, the choice of solver can make a big difference. DO you confirm this is the case for your dataset?

# your code here

With all these options, you should be able to find a very small neural network that fits your data better than the default options. What is your minimal neural network?

# your code here

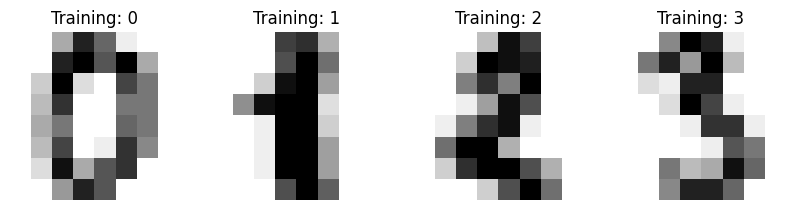

Neural network for classification#

Neural networks are also used for classification

# degraded version of the original MNIST data set

from sklearn import datasets

digits = datasets.load_digits()

_, axes = plt.subplots(nrows=1, ncols=4, figsize=(10, 3))

for ax, image, label in zip(axes, digits.images, digits.target):

ax.set_axis_off()

ax.imshow(image, cmap=plt.cm.gray_r, interpolation="nearest")

ax.set_title("Training: %i" % label)

Use this data set to train a neural network to recognize hand writen digits. Look at the Documention to get help

References#

Credit#

Contributors include Bruno Deremble and Alexis Tantet.